Report: No Foolproof Technique Exists for Identifying AI-Generated Media

A new research report from Microsoft warns that no single technology can reliably distinguish AI-generated content from authentic media, and that growing reliance on any one method risks misleading the public.

The report, titled “Media Integrity and Authentication: Status, Directions, and Futures,” was produced under Microsoft’s Longer-term AI Safety in Engineering and Research (LASER) program and published late last month. Authored by a multidisciplinary team from across the company and led by Chief Scientific Officer Eric Horvitz, the study assesses three core technologies used to verify digital media: cryptographically secured provenance, invisible watermarking, and soft-hash fingerprinting.

In a world of rising amounts of AI-generated material, the report states, a top priority should be certifying reality itself.

The study identified limitations in each authentication method when used in isolation. Provenance metadata, the most widely adopted technique and one built mainly around the Coalition for Content Provenance and Authenticity (C2PA) open standard, can be stripped, forged, or undermined by local device implementations that lack cloud-level security controls. Watermarks can be removed or reverse-engineered, particularly when embedded on consumer-grade devices. Fingerprinting, which uses perceptual hashing to match content against known databases, is described as unsuitable for high-confidence public validation because of the risk of hash collisions and the cost of managing large-scale databases, according to the report.
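To illustrate why perceptual hashes can collide, here is a minimal sketch of a toy "average hash" on 2×2 grayscale grids. It is not the fingerprinting scheme evaluated in the report; the function names and pixel values are invented for illustration, and real systems use far larger hashes.

```python
from typing import List

Grid = List[List[int]]  # grayscale pixel values, 0-255 (illustrative data only)

def average_hash(pixels: Grid) -> int:
    """Toy average hash: one bit per pixel, set when the pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")

original = [[10, 200], [220, 30]]
brightened = [[30, 220], [240, 50]]  # same picture, uniformly brighter
unrelated = [[0, 90], [255, 5]]      # different pixel values, same light/dark layout
inverted = [[200, 10], [30, 220]]    # opposite light/dark layout

# A soft hash tolerates small edits: the brightened copy still matches.
print(hamming_distance(average_hash(original), average_hash(brightened)))  # 0
# The same tolerance lets distinct content share a hash (a collision).
print(hamming_distance(average_hash(original), average_hash(unrelated)))   # 0
print(hamming_distance(average_hash(original), average_hash(inverted)))    # 4
```

The tolerance that makes a soft hash survive a brightness change is the same property that lets an unrelated grid produce an identical value.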

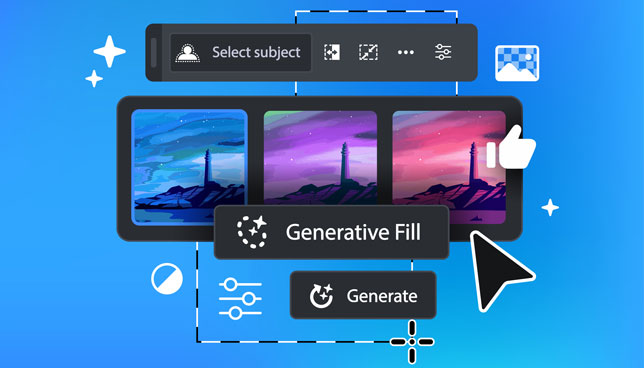

One of the report’s sharper warnings concerns what the researchers call “reversal attacks.” These attacks flip authentication signals so that genuine content looks AI-generated and AI-generated content looks real. In one scenario outlined in the report, an adversary could take a genuine image, make a small AI-assisted edit with a generative fill tool, then attach C2PA credentials that accurately note AI involvement. Although the original image was real, the added disclosure could be used to cast doubt on it.

The report advises that validation platforms show the public only results that meet a high-confidence threshold. Researchers said the most reliable approach combines provenance information with watermarking. If a C2PA manifest is present and successfully validated, or if a detected watermark links back to a validated manifest in a secure registry, the content can be treated as authenticated with high confidence.
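The rule described above can be sketched as a simple decision function. This is a reading of the article's summary, not code from the report or from any C2PA library; the `Signals` fields and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Signals:
    """Hypothetical inputs a validation platform might collect for one media file."""
    manifest_present: bool
    manifest_validated: bool
    watermark_detected: bool
    # True when the watermark resolves to a validated manifest in a secure registry.
    registry_manifest_validated: bool

def high_confidence(s: Signals) -> bool:
    """Either path from the summary above is enough on its own."""
    via_manifest = s.manifest_present and s.manifest_validated
    via_watermark = s.watermark_detected and s.registry_manifest_validated
    return via_manifest or via_watermark

def public_result(s: Signals) -> Optional[str]:
    """Show the public a result only when the high-confidence bar is met."""
    return "high-confidence provenance" if high_confidence(s) else None

print(public_result(Signals(True, True, False, False)))   # high-confidence provenance
print(public_result(Signals(True, False, True, False)))   # None
```

Returning nothing in the second case reflects the report's advice to withhold low-confidence results from the public.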

Hardware security is another significant concern. According to the report, local and offline systems, including most consumer cameras and PC-based signing tools, are less secure than cloud-based implementations. Users with administrative control of a device may be able to modify or bypass the tools that generate provenance data, weakening the chain of trust.

“General confusion concerning the purpose and constraints of MIA approaches highlights an immediate requirement for education,” the report notes, adding that public expectations must be recalibrated to match what these tools can actually deliver before policy adoption moves forward.

The report also expresses concern about AI-based deepfake detectors, which Microsoft’s research team described as a useful but inherently unreliable last line of defense. Proprietary detectors developed by Microsoft’s AI for Good team showed accuracy in the range of 95% under non-adversarial conditions, but the report cautioned that the “cat-and-mouse” dynamic between AI generators and detectors means no detection tool can be considered fully reliable. The team noted that high confidence in a detector may actually amplify the harm caused by false negatives, since trusted results are more likely to go unchallenged.
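A rough worked example shows how many errors a 95% figure still leaves. The numbers are assumptions for illustration only: a pool of 10,000 items, 1% of them AI-generated, and the 95% rate applied equally to both real and AI-generated content, which the report does not state.

```python
def detector_outcomes(total: int, fake_per_100: int, accuracy_per_100: int) -> dict:
    """Split a pool of items into detector outcomes using integer arithmetic.

    All three inputs are hypothetical; accuracy is assumed equal for both classes.
    """
    fakes = total * fake_per_100 // 100
    reals = total - fakes
    caught = fakes * accuracy_per_100 // 100    # AI-generated, correctly flagged
    missed = fakes - caught                     # false negatives: pass as real
    cleared = reals * accuracy_per_100 // 100   # authentic, correctly passed
    wrongly_flagged = reals - cleared           # false positives
    return {
        "caught": caught,
        "missed": missed,
        "cleared": cleared,
        "wrongly_flagged": wrongly_flagged,
    }

outcomes = detector_outcomes(total=10_000, fake_per_100=1, accuracy_per_100=95)
print(outcomes)
# {'caught': 95, 'missed': 5, 'cleared': 9405, 'wrongly_flagged': 495}
```

Under these assumptions, five AI-generated items pass as real, and each carries the detector's implicit endorsement, which is the harm the researchers flag.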

The findings tie into a broader set of AI security efforts Microsoft has pursued in recent months. The company co-founded an open source AI security initiative alongside Google, Nvidia and others. It has also expanded Security Copilot with dedicated AI agents designed to automate threat detection and identity protection across enterprise environments, and warned in a separate analysis that generative AI is accelerating the arms race between attackers and defenders. This latest study adds a new layer of urgency around provenance infrastructure specifically, the technology that underpins how organizations, journalists, and consumers verify what is real.

The report calls on generative AI providers to prioritize provenance and watermarking in their systems, on distribution platforms such as social media sites to preserve C2PA manifest data through the upload process, and on policymakers to align legislative timelines with what is technically feasible.

The full report is available on the Microsoft site.